INDUSTRY INSIGHTS

AX, EX, OX, and PX: Towards a Frictionless Publishing Experience

A reflection from our session at the London Book Fair 2026

Neelanjan Sinha

VP – Product & Technology

At a time when nearly every conversation begins (and sometimes ends) with AI, we wanted to anchor our talk at this year’s London Book Fair around experience instead. Not because AI is unimportant. In 2026, that hardly needs saying. But in real publishing workflows, we keep seeing something else more clearly:

Experience might be the more urgent problem, and the more powerful lever.

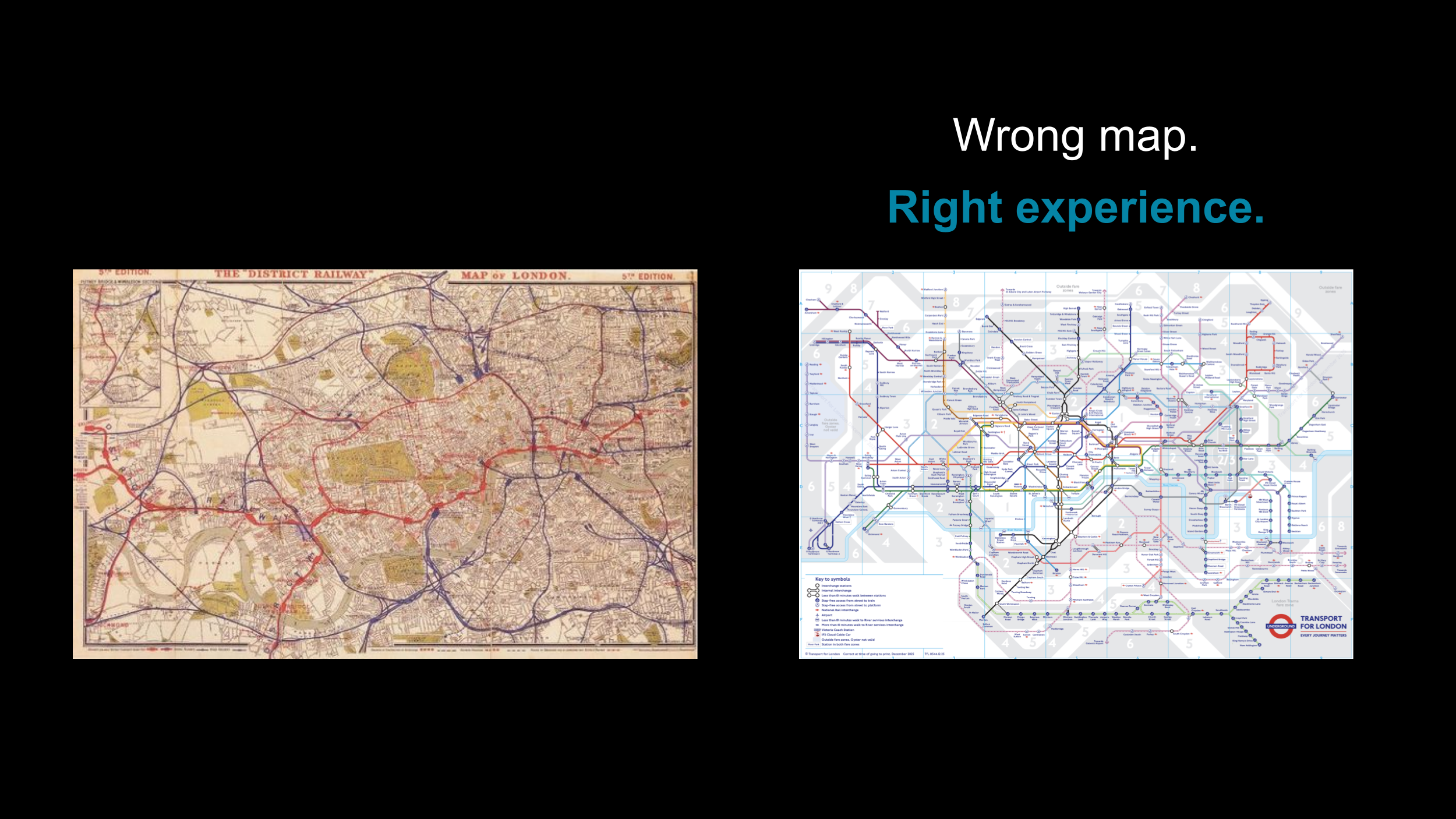

A wrong map that works

The evening before our session, we were on the London Underground, staring at the Tube map above the doors, and a thought landed that ended up reshaping the next morning’s talk.

The Tube map is wrong. Geographically, technically, factually, almost nothing on it matches reality. Distances are distorted, angles are invented. Laid over an actual map of London, the two would barely agree.

And yet, millions of people navigate one of the world’s most complex transit systems with it every day.

In 1933, an engineering draughtsman named Harry Beck made a radical choice. He threw out the geography, straightened the lines, evened the spacing, and stripped the map down to what a traveller actually needed: not where things are, but how to get between them. The result was technically wrong. But experientially right. He didn’t change a single train line. He changed how people experienced the system.

That idea, that something technically incorrect can be experientially superior, felt surprisingly relevant to what we were about to present. Because in publishing too, the instinct is usually to make things more accurate, more detailed, more complete. But sometimes the better intervention is not to add more. It is to redesign how people see the work, move through it, and make decisions within it.

The friction that metrics miss

Publishing systems work. Often quite well. But underneath the surface, there’s a layer of effort that rarely shows up on any dashboard: copy editors working around inconsistent style guides, operations teams managing exceptions invisible to everyone else, and authors struggling with submission guidelines that were written for the system rather than for them.

The turnaround looks fine. The quality score passes. But how did we actually get there?

There is a useful kind of friction in this system, the kind that shows up when an editor questions a reference or catches an error. That friction should stay. The dangerous kind is the friction that forces skilled people to quietly absorb effort that the system should have handled. And the better they are at it, the more invisible it becomes.

Efficiency and experience are not the same thing. A system can look fast and still feel broken to the people inside it.

And when the people carrying that hidden effort leave, or when volume scales, or when AI changes the nature of the task, the fragility surfaces. By then, the problem has become an expensive one to fix.

Four lenses, one system

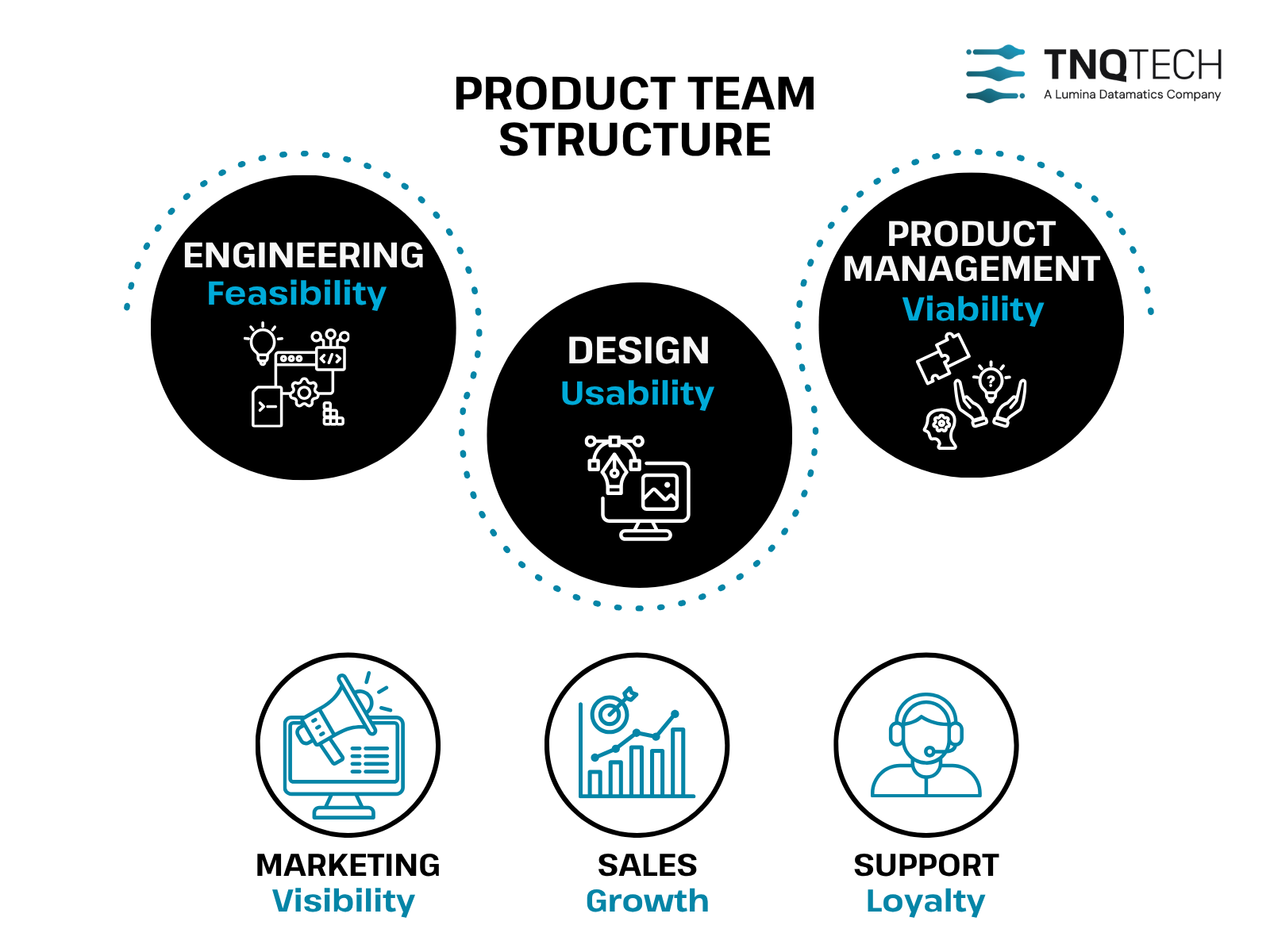

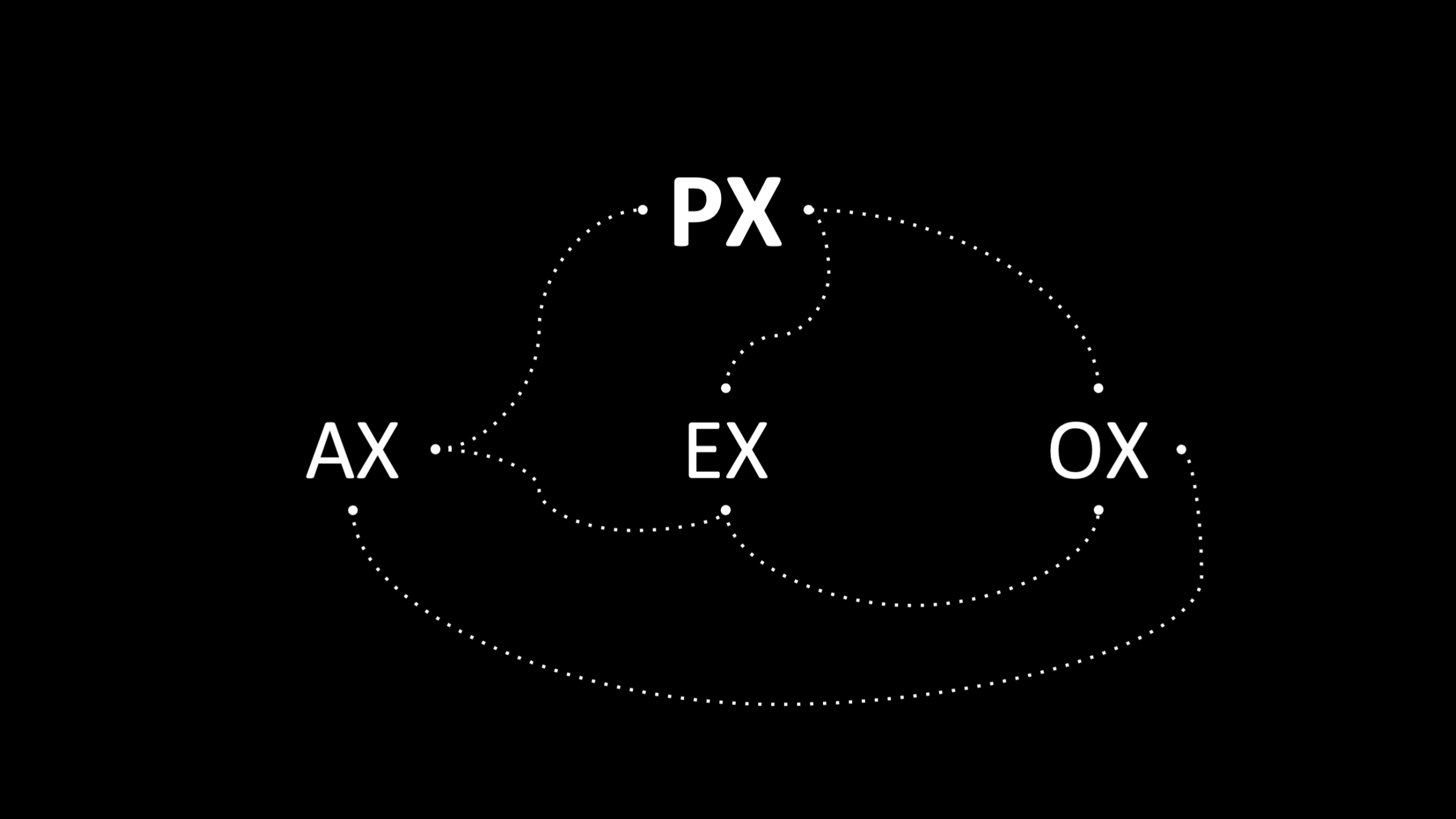

We’ve been thinking about experience through four connected lenses. We call them AX, EX, OX, and PX.

AX (Author Experience): what it feels like to submit, respond to queries, navigate the publishing process.

EX (Editorial Experience): what it feels like to review, copy edit and exercise judgement, especially now, when the task increasingly involves evaluating AI output rather than producing the work yourself.

OX (Operations Experience): what it feels like to make the system actually work across exceptions, handoffs, and invisible dependencies.

PX (Publisher Experience): whether publishers can see the system clearly enough to steer it.

These aren’t standard industry terms. We framed them over time, because experience looks different depending on where you stand in the workflow. When you only optimise at an aggregate level, you miss the friction each persona is quietly carrying.

No single persona sees the whole system. Each experiences a part. And when you optimise one layer in isolation, friction doesn’t disappear. It simply moves elsewhere.

We saw this clearly in a discussion Shanthi was leading around simplifying a publisher’s 12-page author submission guideline. From the author’s point of view, nearly every section seemed necessary. From an operations point of view, the instinct would have been to compress it into a single-page document. That tension between what’s right for one persona and what works across the system doesn’t get solved by better tools. It gets solved by understanding how the layers connect, and what each persona actually needs in order to do their work well. That’s a design problem, not a technology problem.

These layers reinforce each other. When author experience is poor, editorial inherits the mess. When editorial comes under strain, operations absorb it. And when operations are constantly patching invisible gaps, the publisher’s view of the system becomes less reliable.

It compounds the other way too. Improve the experience at one layer thoughtfully, and the benefits propagate across the others.

Why this matters even more with AI

Think about an F1 pit stop: twenty-one people, under two seconds. Every person knows exactly when to move and when to stay still. That’s orchestration. And orchestration is exactly what’s missing when AI enters most publishing workflows.

When we test AI one task at a time, each component may pass. But place them in a real workflow, with real people and real judgement calls, and the system behaves differently. The components were optimised; the orchestration wasn’t. The real question is judgement across the handoffs: when should the system pause, escalate, or involve a human? In publishing, a confident answer isn’t always a safe one.

There’s a cognitive shift here that doesn’t get discussed enough. When you’re copy editing, you build understanding as you go: the argument, the structure, the author’s voice. When you’re reviewing AI output, you need that same understanding while simultaneously evaluating whether each change is right, whether something was missed, whether context was misunderstood. It’s a different task. Harder in some ways. And if the experience around that task is poorly designed, the AI’s capability never translates into workflow value.

We hear “human in the loop” as if it solves the problem. But placing a human in the loop and designing the loop so the human can actually exercise judgement are two very different things. Without the second, it becomes theatre. It looks responsible, but it doesn’t improve the outcome.

AI doesn’t reduce the need for well-designed experience; it raises it. The questions that stay on the table longest aren’t about capability. They’re about trust. Can we trust what we deploy? Will it hold at scale, in the thick of daily production? Will the people working with AI output have the clarity and confidence to do their job well?

Those questions don’t get answered by a better model. They get answered by how well the experience around the AI is designed.

So our position is experience-first, AI-enabled. In many of our product decisions, we deliberately step back before reaching for engineering and ask: is this actually an experience problem? Often, the fix lives in design, clarity, or workflow, not in more technology. That matters, because if the problem is rooted in experience, engineering alone won’t solve it, and may mask it. That discipline shapes how we think about where AI belongs, and where it doesn’t.

It’s also why we’re currently undergoing a formal responsible AI assessment toward certification under ISO/IEC 42001, the standard for AI management systems, not as a badge, but as a forcing function. The process is making us examine how we handle data, transparency, bias, and human oversight across our workflows. In an industry built on research integrity, responsible AI is a prerequisite for trust, not just a nice-to-have.

What we keep coming back to

- Friction reveals where systems leak effort.

- Experience shows where people absorb it.

- Design helps remove the wrong kind of friction and keep the right kind.

AI doesn’t change these principles; it raises the stakes.

We don’t have it all figured out, but if any of this resonates, here’s a question worth sitting with:

Where in your workflow are people absorbing effort that the system should carry? And is the fix you’re reaching for aimed at the right layer?

If any of this sparked a thought, a question, or a disagreement, we’d love to hear from you.

This post is based on our session at the London Book Fair 2026, presented by Shanthi, Jessica, and Neel.