TNQTech Earns ISO/IEC 42001:2023 Certification for Responsible AI

Srividya Tadepalli

Communications Manager – Tech & Product

13 May 2025, Chennai

TNQTech has been awarded ISO/IEC 42001:2023 certification for its AI Management System, making it one of the first technology organisations in scholarly publishing to meet this standard.

What Responsible AI Looks Like in Practice at TNQTech

A framework for the entire lifecycle of AI systems

TNQTech’s framework for responsible AI extends across the entire lifecycle of an AI system: from data collection and model training, to model selection, deployment, monitoring, evaluation, and continuous improvement.

Cross-functional teams that maintain accountability systems

TNQTech’s AI governance model is championed by the Integrated Management System (IMS) team.

“Our pace and scale of innovation, especially with regard to AI adoption, has to be matched by how robust our accountability structures are. This certification validates the work we’ve been doing to ensure that our AI systems are governed responsibly.”

Prabhakar Ramakrishnan | CISO & AVP - IT Infrastructure, TNQTech

Our AI Lab, one of the many manifestations of this model, is both an innovation engine and a guardian for the organisation’s responsible ideation, deployment, and evaluation of AI.

The R&D function helps establish governance frameworks, validation methodologies, and risk-aware deployment practices.

“We are constantly striving to make sure that our AI solutions are scalable, transparent, and aligned with business, customer, and regulatory expectations.”

Rajalakshmi Karat | AVP - R&D, TNQTech

A cross-functional internal steering committee, with support from the IMS team, R&D, and the AI Lab, oversees the implementation of the internal policies, guardrails, evaluation frameworks, and operational standards that every AI initiative within the organisation must follow.

Explainability and traceability in our products

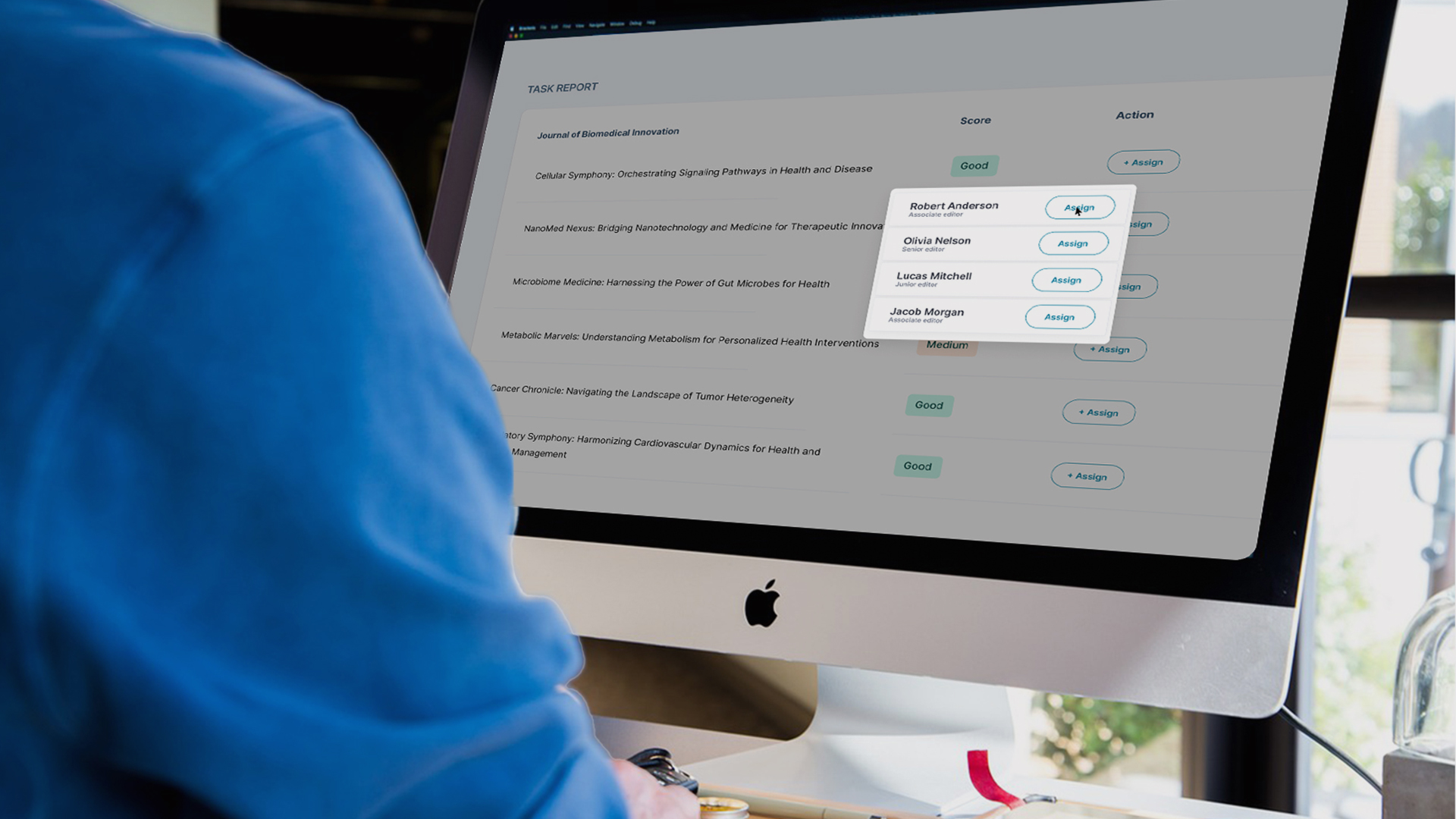

Our AI-enabled products are designed to be clear, accountable, and easy to use. We provide users with simple guidance on what each AI system does, how it should be used, and what to keep in mind while using it.

Robust guardrails and continuous evaluation of AI systems

Having guardrails in place and conducting regular assessments of AI systems are important parts of responsible governance. Assessments are carried out by internal and external auditors to help ensure that our AI systems continue to operate reliably. Proactive risk assessment and mitigation processes help identify, assess, and address potential risks. The IMS team enforces security and privacy controls to safeguard data and information used across AI-enabled systems and workflows.

Human judgement at the helm

As a technology partner to scholarly publishers, we know the importance of safe and reliable AI. Our products and processes are designed to keep a human in the loop wherever necessary, with AI as an invisible layer that supports the people in charge.

Strengthening overall organisational resilience

Through our Integrated Management System, all systems and functions are aligned towards the common objective of enhancing the resilience of the entire organisation. In addition to being ISO/IEC 42001 certified, TNQTech is also certified to the following standards:

- ISO/IEC 27001: Information security management system

- ISO/IEC 27701: Privacy information management system

- ISO 22301: Business continuity management system

Smart, Safe, and Boring AI

“In scholarly publishing, AI has to operate within real workflow, quality, and accountability constraints. Most of the work that makes AI reliable is invisible: validation loops, governance decisions, and trade-offs between what is technically possible and what is operationally responsible. We call these the boring parts of AI. They are what the smart, visible part stands on.”

Neelanjan Sinha | VP, Product & Technology, TNQTech